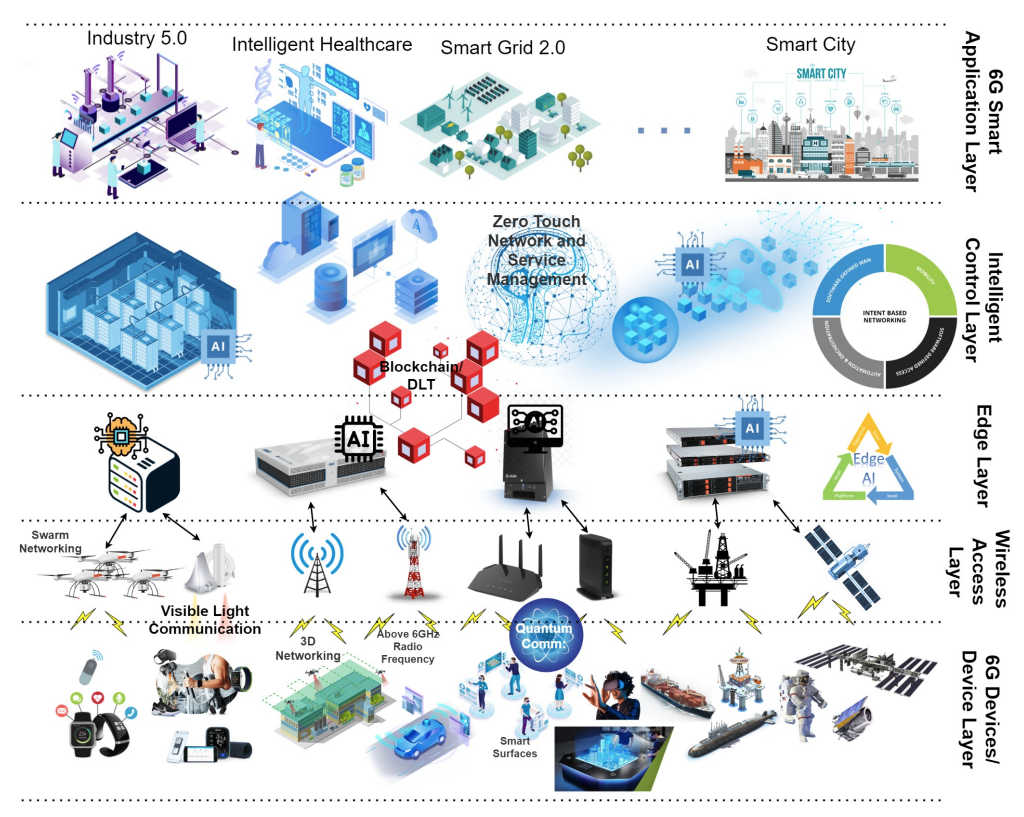

As we stand on the brink of the 6G era, integrating Artificial Intelligence (AI) into its rollout is not just an option but a necessity. This blog explores how AI and 6G can be brought together.

- By: Alex Lawrence

- Reading Time: 7 minutes

- Exclusives

Key Value Indicators (KVIs) are an overlooked element in 6G's development. Get them right, and they could transform the industry by providing a framework for discussing business and social value alongside technology. 6GWorld explores them in more detail with the help of speakers from the recent 6GSymposium.