Future visions for telecoms networks are rarely unconnected from artificial intelligence. At the recent 6GSymposium Fall edition, AT&T’s EVP Andre Fuetsch described a near future in which private networks, infrastructure sharing and more will lead to disaggregated networks made up of “modular, independent, more granular network components end-to-end,” creating a “fully integrated and autonomous heterogenous network”.

This would be a far cry from the centrally-organised networks in which an operator has end-to-end control from a Network Operations Centre; instead, the focus would be on assembling resources to deliver services where and when required.

David Rogers, CEO of security firm Copper Horse, pointed out during the same event one of the major challenges that such a vision faces.

“How are we going to store all this [data] for analysis and how are we going to process it quickly enough through AI and ML algorithms? Does anybody have the technology, or is going to be working on the technology to be able to do this? Because frankly it doesn’t seem to be working right now. And with a lot of ‘AI’, if you pull back the cover it’s the Scooby-Doo thing, right? ‘Oh, look, it’s an ‘if-else’ nested set of statements!’”

Data – Too Much or Not Enough

There is clearly a good distance to go between current capabilities and what would be needed for fully autonomous and modular networks, both in terms of the data and the way it is processed. Nada Golmie, head of wireless at the National Institute of Standards and Technology’s (NIST) Communications Technology Laboratory, emphasised one of the dual challenges facing researchers and organisations aiming to solve the problem.

According to her, while network owners possess huge quantities of data, that data is commercially valuable, which creates a disincentive to share it. As a result, researchers in academia, for example, are unable to develop algorithms tailored to the circumstances of real, live networks. Instead, they depend on training data that does not come from those networks.

This may not provide the right results in reality, especially given the very different markets and physical environments that networks appear in. “Metrology really is an important challenge we have to beat,” Golmie commented during the 6GSymposium. “If we want to have the right algorithms giving us the right results, we need to be able to measure their outputs.”

Meanwhile, people operating in live environments face a very different challenge. Bob Everson, Cisco’s Senior Director for 5G Architecture, underlined this when it comes to network security:

“We’re split between full packet capture or this cryptic sort of hieroglyphic that is almost meaningless for some security events. In between lies the real answer […] but we need much richer telemetry and information than we’re capturing today, especially because the bad guys know to move laterally and they know where we don’t have eyes today.”

Avast’s CISO Jaya Baloo concurred that full packet inspection would be unfeasible. “That’s just copying your entire production network to a separate network that only does analysis. That’s ridiculous amounts of data and investment. We try to find ways just to look at flow traffic, and flow traffic is also not straightforward because it’s so dependent on your sampling rate and your ability to process near-real-time. Even that is quite problematic,” she observed.
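Baloo's point about sampling rate can be illustrated with a minimal sketch. It assumes a simple systematic 1-in-n packet sampler that scales byte counts by n; the function name `sample_flows`, the flow labels and the traffic mix are all hypothetical and are not drawn from any product or from the speakers' own tooling.

```python
from collections import Counter


def sample_flows(packets, n):
    """Estimate per-flow byte counts from systematic 1-in-n packet sampling.

    packets: list of (flow_id, size_in_bytes) tuples, in arrival order.
    n: keep every n-th packet and scale its size by n (an assumed, simple estimator).
    """
    estimate = Counter()
    for index, (flow_id, size) in enumerate(packets):
        if index % n == 0:  # only every n-th packet is observed
            estimate[flow_id] += size * n
    return dict(estimate)


# Hypothetical traffic: one bulk transfer followed by a two-packet probe.
packets = [("bulk", 1000)] * 98 + [("probe", 100)] * 2

full_view = sample_flows(packets, 1)      # every packet is seen
sampled_view = sample_flows(packets, 10)  # 1-in-10 sampling
print(full_view)
print(sampled_view)
```

With every packet observed, both flows appear. At 1-in-10 sampling the short probe falls between samples and disappears from the estimate entirely, while the bulk flow is slightly overestimated. Real collectors usually sample randomly rather than systematically, but the trade-off between data volume and visibility is the same.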

Beyond ‘Data’?

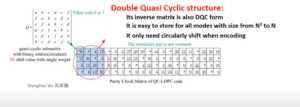

Nageen Himayat, Director of Intel Labs’ Wireless Systems Research, raised one possible solution to the challenge of data ingestion and correlation, highlighting the work in progress to develop systems of semantic communications in time for 6G.

Semantic communications attempts to move beyond the dutiful replication of every bit at every stage of a communication, focusing instead on the ability to convey meaning or accomplish a goal. This would in itself require a level of machine learning to identify the relevant information in a data stream, but could offer efficiencies in data transmission between nodes as well as a level of protection against attacks piggybacking on the data stream.

Will the Intelligence be There?

Himayat, speaking during a 6GSymposium debate asking “Will 6G be Ready for Native AI and Machine Learning?”, also underlined the quantity of work being undertaken in distinct areas of AI for telecoms networks: projects to support federated learning across independent network elements, for example, and to support distributed AI in the network – effectively letting ‘dumb’ IoT end devices use the network’s resources to act as though smart.
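As a rough illustration of what federated learning across independent network elements involves, the sketch below shows federated averaging, one widely used aggregation rule. It is not necessarily the approach taken by the projects Himayat referred to, and the function name, weights and sample counts are hypothetical. Each element trains locally and shares only its model weights, which a coordinator combines in proportion to the amount of local data.

```python
def federated_average(client_weights, client_sizes):
    """Combine locally trained weight vectors into one global vector.

    client_weights: one list of floats per network element (all the same length).
    client_sizes: number of local training samples behind each weight vector.
    Raw data never leaves the elements; only the weights are shared.
    """
    total = sum(client_sizes)
    dimensions = len(client_weights[0])
    return [
        sum(weights[i] * size for weights, size in zip(client_weights, client_sizes)) / total
        for i in range(dimensions)
    ]


# Hypothetical example: two elements, the second with three times as much data.
global_weights = federated_average([[1.0, 2.0], [3.0, 4.0]], [1, 3])
print(global_weights)  # [2.5, 3.5]
```

The element with more data pulls the global model further towards its own weights, which is one reason this style of learning is attractive where operators are reluctant to pool the underlying data.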

With some time still in hand, these capabilities could emerge in the right time-frame to support generations beyond 5G. Indeed, we could start to see wireless networks and AI being co-designed to create a more effective outcome.

Jakob Hoydis, a Principal Research Scientist at NVIDIA, proposed that, just as processors are moving from general-purpose designs to domain-specific processors (such as those for graphics), so 6G should not be a standard, but a capability. “6G networks should be able to autonomously specialise to a specific radio environment and application,” he suggested.

Hoydis then followed this up with the striking assertion that the whole wireless physical layer could be learned by AI, rendering management of the air interface much more straightforward. The air interface, in this scenario, would be able to adapt to new forms of coding and modulation, and to optimise itself for the services and devices connected to it.

Among other things, this would reduce the need for global standards, allowing for waveforms, spectrum and more to be specific to particular types of devices and their requirements – such as, for example, resilient waveforms for high-speed mobile devices like cars or trains. In other words, the network would adapt itself to the needs of what uses it – the polar opposite of today’s standards-driven attempt to control how connections are made.

Northeastern University Professor Kaushik Chowdhury summarised the outcome neatly when he asked “are we moving towards AI to enable networks, or networks to enable AI?”

A Business Problem?

While there are still real questions over how we would test or trouble-shoot an autonomous air interface or network – to Golmie’s earlier point – it has been striking to see just how much work is under way today to enable the modular, autonomous elements of Fuetsch’s network vision.

However, with private networks we are already seeing a move away from the traditional centralised network with end-to-end visibility and control of quality – with the carrier as the spider in the heart of the web, weaving and managing. A disaggregated network of networks with modular intelligence will be much more like an ants’ nest. Even assuming, for the sake of argument, that networks beyond 5G will be able to master the technology challenges, they will require some very different models of operation, liability and risk management, interworking, data sharing, billing and more. Without those business models in place, or without the desire to put them in place, operators such as AT&T, Verizon or T-Mobile are likely to miss out on the full value of this revolution in networking.

Image courtesy of Nageen Himayat, Intel