There is great interest in building digital twins for industrial applications – indeed, this interview suggests that digital twins might be a key application for the nascent metaverse.

At the same time, there has been much discussion about the need for automation and autonomy within telecoms networks, especially as the infrastructure becomes less centralised.

A key problem is that increasingly challenging services will need coordination across this decentralised network, and results may be difficult to predict or optimise. This is something that 5G networks are starting to wrestle with, and which will only become more pressing in 5G Advanced and 6G.

A recent joint research publication from Nvidia and Heavy.ai brings together these concepts, proposing design aspects and a reference architecture for uniting a network’s digital twin with the real-world network.

The authors propose an example of how this may be done using the companies’ existing digital twin capabilities. While this may be taken with a pinch of salt given the interests of the companies involved, it at least points the way towards the practicality of what is proposed.

Twin Networks

As the paper’s authors point out, “the complexity of the sixth-generation (6G) wireless networks will grow due to their scale, multi-vendor components, and the need to support diverse use cases. This trend calls for innovative tools and platforms like DTs [Digital Twins] to facilitate the design, analysis, and operation of wireless networks for 6G and beyond.”

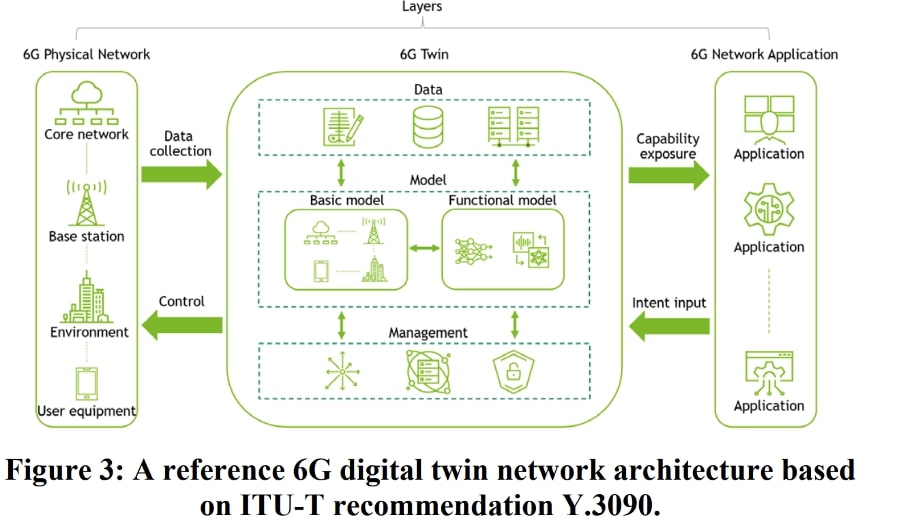

They describe a digital twin network, or DTN, as “a digital replica of the full lifecycle of a physical network. It uses data and models to create a physically accurate network simulation platform, which provides up-to-date network status and predicts future network state.”

Intriguingly, the proposed digital twin is not simply a passive model drawing data from the network and its applications. It “supports two-way communication between the physical network and the virtual twin network to achieve real-time interactive mapping and closed-loop decisions.”

In other words, this would be a means both of tracking performance and of modelling and implementing updates and improvements in network performance and related delivery. Indeed, the paper outlines the following uses of such an interactive DTN:

- Network simulation and planning

- Network operation and management

- Data generation by simulation

- AI training and inference

- What-if analysis
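To make the closed-loop idea concrete, here is a minimal sketch of one pass through such a loop: telemetry flows from the physical element to the twin, the twin runs a what-if analysis over candidate settings, and the chosen setting is pushed back. It is not taken from the paper. The class names (`PhysicalCell`, `CellTwin`), the single-cell scope and the simple demand-over-capacity utilisation model are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class CellState:
    """Toy snapshot of one cell; the fields are illustrative assumptions."""
    demand_mbps: float
    capacity_mbps: float


class PhysicalCell:
    """Hypothetical stand-in for a real network element."""

    def __init__(self, demand_mbps: float, capacity_mbps: float) -> None:
        self._state = CellState(demand_mbps, capacity_mbps)

    def read_telemetry(self) -> CellState:
        # Physical -> twin: hand over a copy of the current state.
        return CellState(self._state.demand_mbps, self._state.capacity_mbps)

    def apply_capacity(self, capacity_mbps: float) -> None:
        # Twin -> physical: push the chosen configuration back.
        self._state.capacity_mbps = capacity_mbps


class CellTwin:
    """Virtual replica that mirrors state and evaluates what-if scenarios."""

    def __init__(self) -> None:
        self.state: Optional[CellState] = None

    def sync(self, telemetry: CellState) -> None:
        self.state = telemetry

    def what_if(self, capacity_mbps: float, demand_growth: float) -> float:
        """Predict utilisation for a candidate capacity and demand growth."""
        if self.state is None:
            raise RuntimeError("sync() must be called before what_if()")
        predicted_demand = self.state.demand_mbps * (1.0 + demand_growth)
        return predicted_demand / capacity_mbps

    def recommend(self, candidates: Iterable[float], demand_growth: float,
                  max_utilisation: float) -> Optional[float]:
        """Return the smallest candidate that keeps utilisation under the limit."""
        for capacity in sorted(candidates):
            if self.what_if(capacity, demand_growth) <= max_utilisation:
                return capacity
        return None


def closed_loop_step(cell: PhysicalCell, twin: CellTwin,
                     candidates: Iterable[float], demand_growth: float = 0.2,
                     max_utilisation: float = 0.8) -> Optional[float]:
    """One pass: sync the twin, run the what-if analysis, apply the result."""
    twin.sync(cell.read_telemetry())
    choice = twin.recommend(candidates, demand_growth, max_utilisation)
    if choice is not None:
        cell.apply_capacity(choice)
    return choice
```

Calling `closed_loop_step(PhysicalCell(400, 500), CellTwin(), [500, 650, 800])` would have the twin reject 500 Mbps as too tight for the projected demand and apply 650 Mbps. A real DTN would replace the one-line utilisation formula with far richer models, but the direction of data flow is the same.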

The authors make a very striking point that it would be perfectly feasible to construct such digital twins for subsets of a network, such as a cell or a transport network, rather than attempt to build a complete network twin from scratch.

“For example, a DTN responsible for a cell site can be operating at a high level of detail using high-fidelity ray tracing employing multi-bounce specular reflection, 3D diffraction, and diffuse scattering to enable site-specific optimizations in the base station’s physical and medium-access control layers. A DTN in the cloud would be responsible for end-to-end service analysis and network operation including resource management and, for example, AI/ML approaches for network slicing.”

This would enable operators both to test the performance of twins and to build out DTNs alongside physical infrastructure upgrades. The benefits would only apply to a subset of the network, of course, but in itself that can offer some valuable lessons for future developments in an incremental rollout.

Architecture

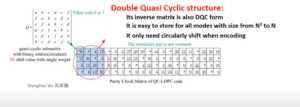

The paper builds on ITU proposals for how network architecture could accommodate a digital twin. Essentially, it would mediate between the physical network and the network applications (such as OAM services or network optimisation), as laid out in the diagram above.

Within a digital twin, the three domains are intriguing. The data domain acts as the bedrock – drawing live data from the network, storing and managing it. This is something which sounds straightforward.

However, operators are already wrestling with the different kinds of data velocity and accuracy required to understand the network and respond in appropriate time. Particularly as demands for differentiated service levels or network slices develop, pulling together data for the twin in a timely manner will become a discipline in itself.

On top of that sits a model domain, described as “a service-mapping subsystem comprising of models to represent the real-world objects in the 6G physical network based on the collected data. There are two types of models: basic and functional.”

Basic models represent physical network elements and their topology, while functional models analyse activity and develop insights from it, such as traffic analysis, fault detection and so on.

On top of that again is a management domain, which handles model creation, monitoring, updating and security management.

While this sounds relatively unexciting, this is really where we get the opportunity for humans to derive insights and take control over a largely autonomous network. By managing what digital twin models are being used, how they operate and what they do, the operator is kept in the loop. Arguably this would be the main point of control for differentiating performance between networks.
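The layering of the three domains can be sketched in a few lines. This is an illustrative toy rather than the paper's or the ITU's design: the class names, the single "load" metric per node and the `overloaded_nodes` fault check are all hypothetical. The point is only to show data at the bottom, basic and functional models above it, and a management layer that decides which models run.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class DataDomain:
    """Data domain: stores measurements collected from the physical network."""
    samples: Dict[str, List[float]] = field(default_factory=dict)

    def ingest(self, node: str, load: float) -> None:
        self.samples.setdefault(node, []).append(load)

    def latest(self, node: str) -> float:
        return self.samples[node][-1]


@dataclass
class BasicModel:
    """Basic model: network elements and their topology."""
    links: List[Tuple[str, str]]

    def nodes(self) -> List[str]:
        return sorted({node for link in self.links for node in link})


# A functional model is any callable that turns data plus topology into an insight.
FunctionalModel = Callable[[DataDomain, BasicModel], object]


def overloaded_nodes(data: DataDomain, topo: BasicModel,
                     limit: float = 0.9) -> List[str]:
    """Hypothetical functional model: flag nodes whose latest load exceeds a limit."""
    return [n for n in topo.nodes()
            if n in data.samples and data.latest(n) > limit]


class ManagementDomain:
    """Management domain: registers, runs and retires functional models."""

    def __init__(self, data: DataDomain, topo: BasicModel) -> None:
        self.data = data
        self.topo = topo
        self._models: Dict[str, FunctionalModel] = {}

    def register(self, name: str, model: FunctionalModel) -> None:
        self._models[name] = model

    def retire(self, name: str) -> None:
        self._models.pop(name, None)

    def run_all(self) -> Dict[str, object]:
        return {name: model(self.data, self.topo)
                for name, model in self._models.items()}
```

In this sketch the operator's control sits in `register` and `retire`: the network data keeps flowing regardless, but which analyses are applied to it is an explicit management decision.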

A Network of Digital Twin Networks

As laid out, there are a few interesting corollaries from the paper. One is that, if DTNs are developed incrementally – putting them in place as capabilities and network updates allow – operators are likely to end up with a heterogeneous set of digital twins, for example with models that are optimised for performance on the radio, core, or transport networks.

The other is that there may be good reasons to have interfaces between DTNs belonging to different network owners, for example between private local networks and national ones; or even for operators to access DTNs on an as-a-service model, upgrading and adding elements on a continuous basis as capabilities evolve.

The paper notes that “it is vital to develop common components, generally applicable frameworks, standardised interfaces, and universal platforms and tools to enable interoperability and extensibility of the 6G DTNs. These developments will gradually evolve into unified tools to build the diverse 6G DTNs on universal platforms hosting data, models, and management functions.”
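As a rough illustration of what a standardised interface buys, the sketch below defines one small contract that heterogeneous twins could each implement, so that a caller can combine their predictions without knowing how each twin works internally. Everything here is hypothetical: the `TwinInterface` protocol, the single `predict_latency_ms` method and the toy latency formulas are assumptions for the example, not anything specified in the paper.

```python
from typing import Dict, List, Protocol


class TwinInterface(Protocol):
    """Hypothetical common contract that every twin would expose."""
    name: str

    def predict_latency_ms(self, offered_load: float) -> float:
        ...


class RadioTwin:
    """Toy radio-domain twin: latency rises sharply as load approaches 1."""
    name = "radio"

    def predict_latency_ms(self, offered_load: float) -> float:
        return 2.0 / (1.0 - min(offered_load, 0.99))


class TransportTwin:
    """Toy transport-domain twin: latency grows linearly with load."""
    name = "transport"

    def predict_latency_ms(self, offered_load: float) -> float:
        return 1.0 + 4.0 * offered_load


def end_to_end_latency_ms(twins: List[TwinInterface],
                          offered_load: float) -> Dict[str, float]:
    """Query each twin through the shared interface and sum the results."""
    per_twin = {t.name: t.predict_latency_ms(offered_load) for t in twins}
    per_twin["total"] = sum(per_twin.values())
    return per_twin
```

Because the function depends only on the shared interface, a twin run by a different network owner, or consumed as a service, could be added to the list without changing the caller.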

Clearly there is a huge amount of work to do to make such a situation a reality. The kind of data ingestion and processing required needs both acceleration for processing in real time and the means to store and analyse that data as needed, not to mention continued work to reduce energy demands.

As far as open and interoperable interfaces go, we are still short of them even in more established fields, let alone the certification and testing to go with them.

The authors note that there are 5G DTNs in existence, such as a city-scale one which Ericsson has built. One swallow does not make a spring; but it does show that there is at any rate some basis to work from towards realising 6G DTNs in parallel with the development of 6G’s network architecture itself.